CLUSTER 6.6 — Assessment Without Tests: The New Measurement Layer

URL: /education/future-learning-infrastructure/assessment-without-tests/

---

Generative AI broke the standardized assessment model. Multiple-choice tests, essays, take-home assignments, and even some forms of in-class examination all face credibility questions when AI can produce student-quality work in seconds. The institutions that are responding are rebuilding assessment around process, demonstration, and applied competency rather than output measurement.

What broke

The traditional assessment model measured what students produced. When AI can produce student-quality output, measuring output no longer reliably measures student learning. Detection technology is unreliable, and prohibition is unenforceable. The pre-AI assessment paradigm cannot be restored.

What replaces output measurement

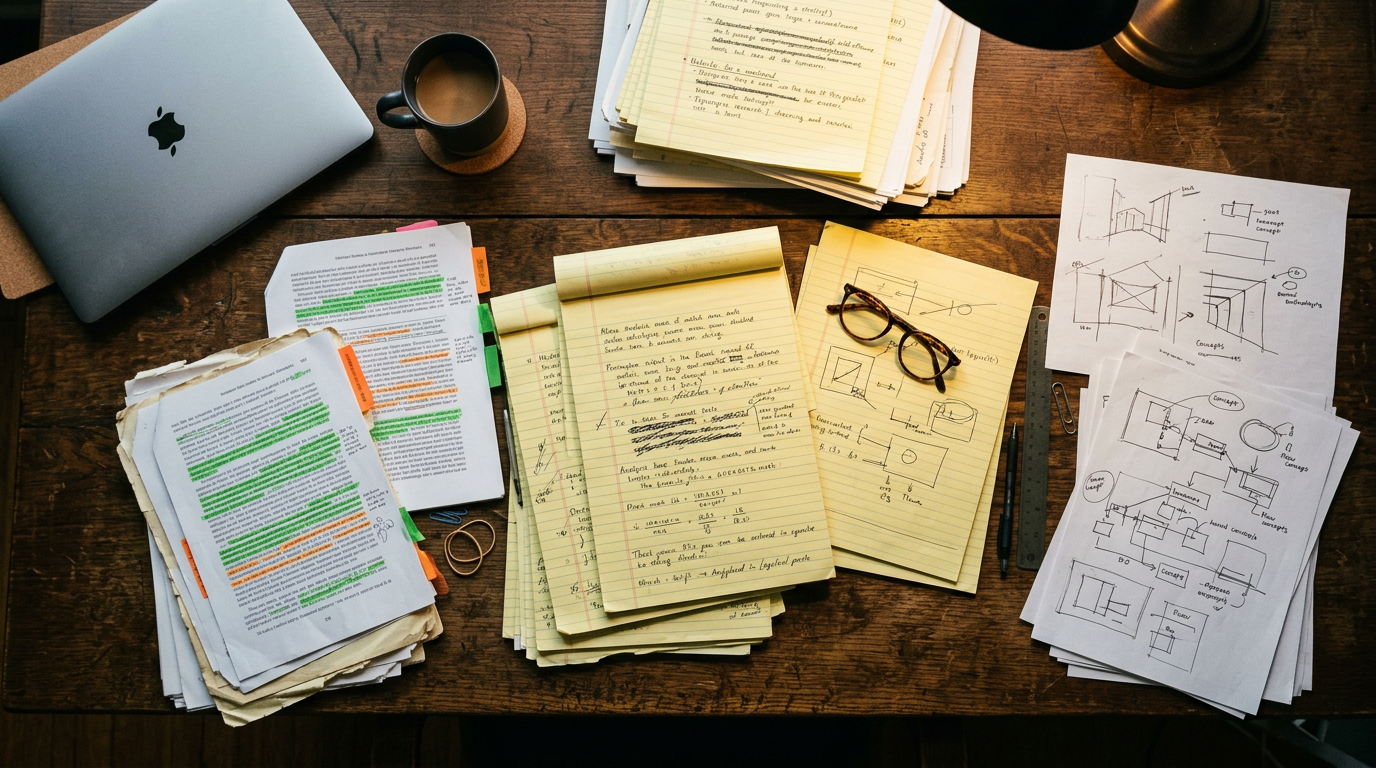

Process documentation. Students document how they produced their work: drafts, decisions, sources, and iterations. Process is harder to fabricate than output.

Oral examination. Students defend written work, discuss their process, and demonstrate understanding through dialogue. This revives a much older assessment tradition that has new relevance in the AI era.

Redesigned in-class assessment. In-class writing, problem-solving, and presentation, with AI use limited or impossible during the assessment itself.

Project-based assessment. Extended projects with multiple checkpoints, faculty engagement throughout, and demonstration components.

Applied competency demonstration. Students demonstrate skills in applied contexts such as labs, clinical experiences, fieldwork, and simulations.

Peer assessment and discussion. Student dialogue with peers, evaluated for quality of engagement and understanding.

What the new assessment paradigm requires

Assessment design support. Faculty designing AI-resistant assessments need institutional support, training, and dedicated time.

Course redesign. Many courses require structural redesign to accommodate new assessment models. Tactical adjustments to individual assignments are not enough.

Faculty capacity. Process documentation, oral examination, and project-based assessment require more faculty time per student than output measurement. Capacity constraints become binding.

AI augmentation of faculty capacity. AI classroom assistants can multiply faculty assessment capacity. The same AI that broke output measurement enables the new measurement paradigm at scale.

Student adaptation. Students raised on output-measurement assessment must adapt to new models. The cultural and skills transition takes multiple cohorts.

Institutional infrastructure. Assessment platforms, gradebook structures, and learning analytics must support the new measurement layer.

What this means for accreditation and outcomes reporting

Accreditors increasingly expect institutions to demonstrate student learning, not just credit accumulation. The new assessment paradigm supports better learning-outcomes reporting than traditional models did. Institutions that build the infrastructure are positioned for the way accreditation is evolving. Institutions that do not will struggle with both authentic assessment and accreditation reporting.

What faculty are learning

Faculty members rebuilding assessment around process, demonstration, and applied competency report two consistent observations. First, the new assessment takes more time per student. Second, the learning it makes visible is substantially deeper than what output measurement captured.

The institutions that have invested in the assessment transition are producing demonstrable learning gains. The institutions that have not are accumulating credibility problems with students, with accreditors, and increasingly with employers who note credential inflation.

---