CLUSTER 6.11 — Multimodal Learning AI: Voice, Video, Vision

URL: /education/future-learning-infrastructure/multimodal-learning-ai/

---

Multimodal AI — systems that process and generate voice, video, and visual content alongside text — has expanded the surface of AI-enabled learning in ways that text-only systems could not. The category is reshaping what AI can do in instruction, assessment, accessibility, and learning support.

Where multimodal AI is reshaping learning

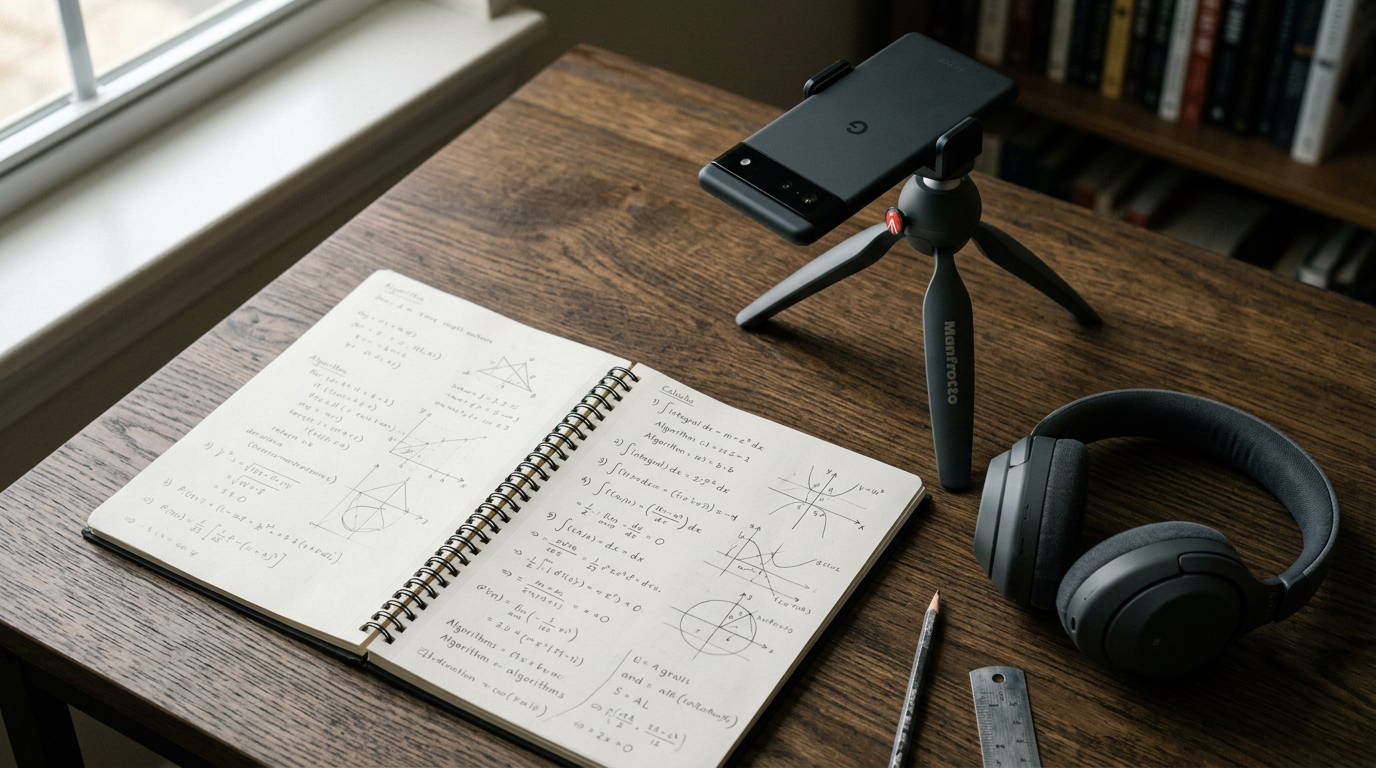

Vision-enabled tutoring. AI systems that can see student work — handwritten math, drawings, lab notebook entries, code diagrams — and respond to it. Substantially expands tutoring applicability in subjects where work is visual rather than typed.

Voice-enabled language learning. AI conversation in target languages with feedback on pronunciation, fluency, and comprehension. Substantially improves practice volume in language acquisition.

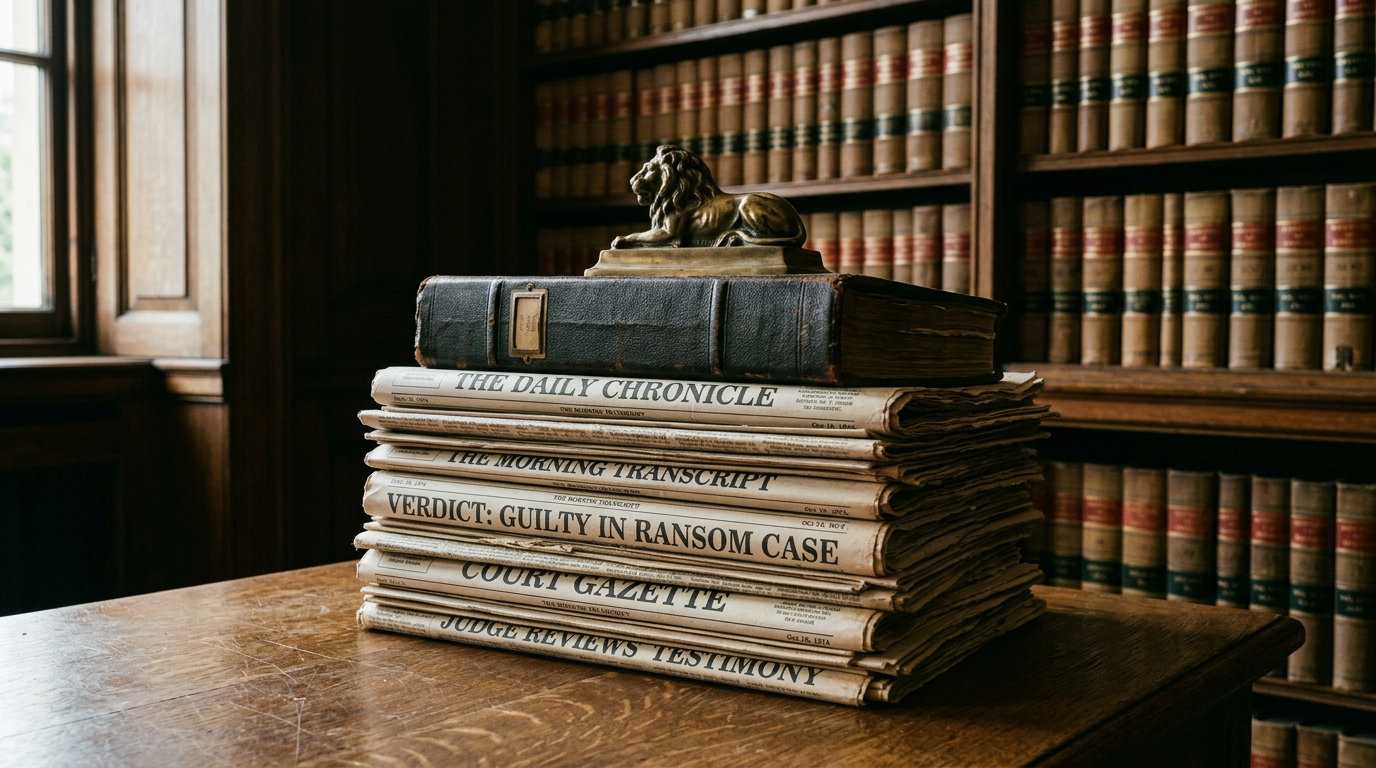

Video-based simulation. Professional education simulations involving video — clinical examination, courtroom presentation, classroom teaching — with AI evaluation and feedback.

Multimodal assessment. Assessment that combines spoken explanation, visual demonstration, and written analysis. Returns to assessment models that better measure learning than text-only assessment.

Accessibility expansion. Voice interfaces for students with visual or motor impairments. Visual interfaces for students with hearing impairments. Real-time language translation in multimodal contexts.

Lab and field instruction. AI systems that can observe and provide feedback on physical experimental work, fieldwork observations, and applied practice.

What multimodal deployment requires

Hardware infrastructure. Cameras, microphones, computing capacity. Often available; sometimes requires institutional investment.

Privacy and consent infrastructure. Voice and video raise privacy considerations beyond text. FERPA, state privacy law, and student consent frameworks must address multimodal use.

Accessibility considerations. Multimodal interfaces serve some accessibility needs and create others. Alternative paths required.

Pedagogical alignment. Multimodal AI works best when integrated with pedagogical frameworks rather than deployed as technology features.

Faculty training. Faculty using multimodal AI need training in pedagogical integration and student support.

Vendor evaluation. Multimodal AI products vary widely in quality, privacy posture, and integration depth.

Where the category is heading

Multimodal AI will likely become baseline expectation for learning infrastructure by the late 2020s. The institutions deploying multimodal capability now are positioning for the category. The institutions that limit AI deployment to text-only systems are missing the most significant pedagogical opportunities the technology offers.

What's still uncertain

Privacy norms. Voice and video raise privacy considerations that institutional posture is still developing.

Cost and scaling. Multimodal AI is more computationally expensive than text-only. Scaling economics are still developing.

Accuracy in specialized domains. Multimodal AI accuracy varies by domain. Specialized medical, legal, and technical contexts may have accuracy limitations that institutional deployment must address.

Faculty practice. Faculty patterns for multimodal AI use are emerging. Best practices are not yet established.

The multimodal AI category is early enough that institutional posture and faculty practice will substantially shape how the technology integrates with learning. The institutions that engage early shape the practices. The institutions that wait inherit practices shaped by others.

---