CLUSTER 2.10 — Student Search Behavior Inside ChatGPT and Perplexity

URL: /education/admissions-marketing-ai-era/search-behavior-chatgpt-perplexity/

---

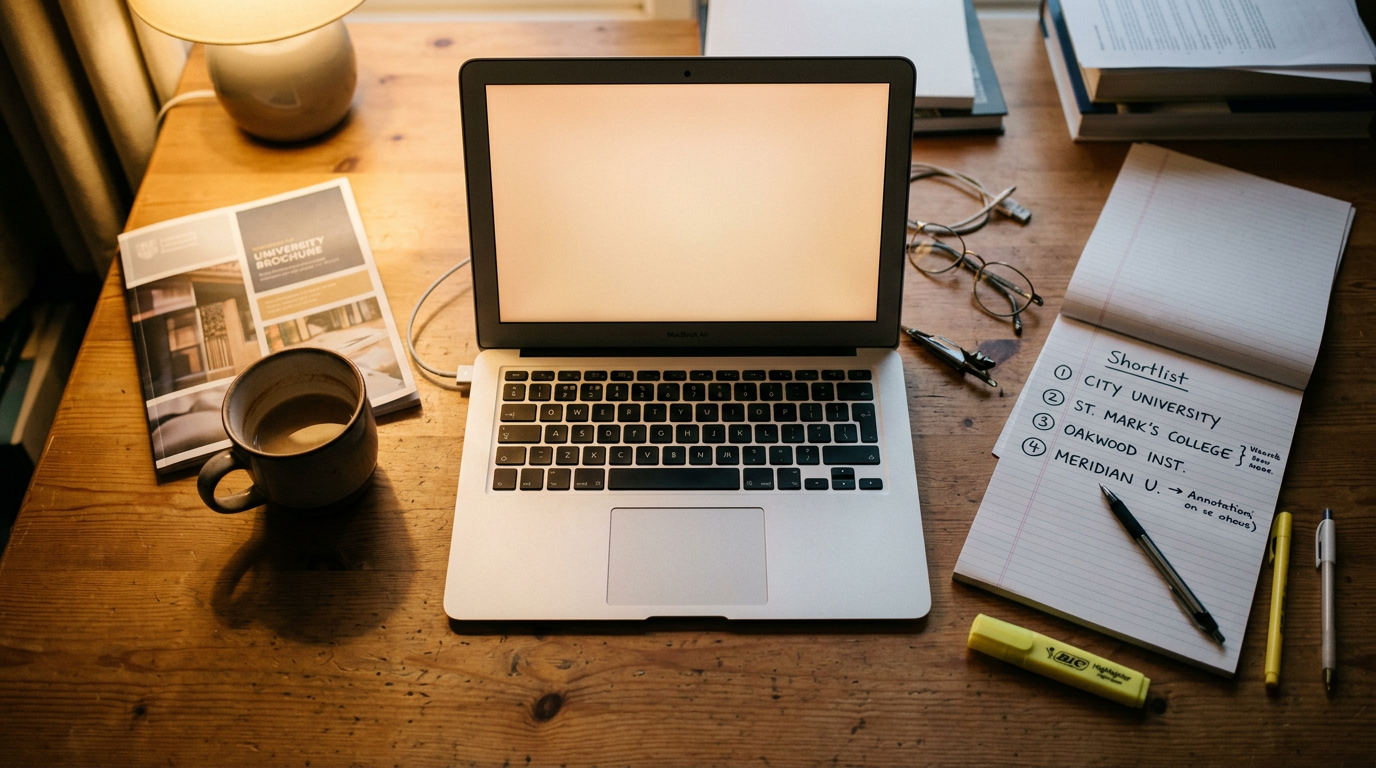

Prospective students use ChatGPT and Perplexity differently from the way they use Google, and universities optimized for Google search alone are missing the shortlist conversation.

The behavioral patterns are now well documented. Compared with Google searches, AI engine searches are longer, more conversational, more multi-turn, and more comparative. They are also more decisional: the prospect is often using the engine to actively narrow a list, not just to gather information.

What prospects ask AI engines

Five dominant query patterns.

Category discovery. "What should I study if I'm interested in psychology and computer science?" Prospects use AI to map fields of study before they map institutions.

Comparative shortlist generation. "What are the best small liberal arts colleges for environmental science in the Northeast?" The query produces a named shortlist of three to seven institutions. The institutions on that list win the shortlist contest. The ones not on it are absent from the prospect's consideration set.

Outcomes verification. "What is the job placement rate for engineering graduates at [institution]?" Prospects verify outcomes data through AI engines that aggregate across multiple sources.

Financial aid scenario modeling. "What is the average net price for a family earning $90,000 at [institution]?" Prospects use AI to model financial scenarios before they apply.

Cultural and lifestyle fit. "What is the social scene like at [institution]?" Prospects use AI to triangulate cultural fit across multiple data sources, including Reddit, Niche, and other student-generated content.

What this means for admissions marketing

Three operational priorities.

1. Category-level visibility. The institution needs to appear in AI engine responses to the category prompts its target prospects use, not just to prompts that name the institution. Category prompts are the front door.

2. Outcomes data infrastructure. Public, machine-readable outcomes data (employment rates, median earnings, graduate school placement, program-specific outcomes) gets pulled into AI engine responses. Institutions that publish this data first and structure it for retrieval win comparison queries.

3. Net-price transparency. Publish net price calculators that work, financial aid scenario pages with clear examples, and structured pricing information. AI engines surface this content, and institutions that hide pricing data lose to those that publish it.

The strategic priority

Treat AI engines as a recruitment market in their own right. Measure Citation Share by category. Optimize the retrieval anchors that move it. Track quarterly. Adjust the content strategy against the data.
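A minimal sketch of measuring Citation Share by category, assuming Citation Share means the fraction of sampled category-prompt responses that name the institution. That definition, the record format, the `citation_share` helper, and the institution names are all hypothetical illustrations; collecting the responses from ChatGPT or Perplexity is out of scope, and the sample data below is made up rather than real measurement.

```python
from collections import defaultdict


def citation_share(responses, institution):
    """Fraction of sampled responses, per category, that name `institution`.

    Assumed (hypothetical) definition: Citation Share for a category =
    responses in that category citing the institution / all sampled
    responses in that category.
    """
    totals = defaultdict(int)  # responses sampled per category
    hits = defaultdict(int)    # responses per category that cite the institution
    for r in responses:
        totals[r["category"]] += 1
        if institution in r["cited"]:
            hits[r["category"]] += 1
    return {category: hits[category] / totals[category] for category in totals}


# Hypothetical sample: one record per (category prompt, engine) response,
# with the set of institutions that response named.
responses = [
    {"category": "environmental science", "engine": "chatgpt", "cited": {"College A", "College B"}},
    {"category": "environmental science", "engine": "perplexity", "cited": {"College B", "College C"}},
    {"category": "environmental science", "engine": "chatgpt", "cited": {"College A"}},
    {"category": "environmental science", "engine": "perplexity", "cited": {"College C"}},
    {"category": "nursing", "engine": "perplexity", "cited": {"College C"}},
    {"category": "nursing", "engine": "chatgpt", "cited": {"College A", "College C"}},
]

print(citation_share(responses, "College A"))
# {'environmental science': 0.5, 'nursing': 0.5}
```

Rerunning the same fixed prompt set each quarter and comparing the per-category shares gives the trend line the quarterly review needs; keeping the prompt set stable matters more than its size, since a changed prompt set makes quarter-over-quarter comparisons unreliable.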

The institutions practicing this discipline are appearing on shortlists their peers do not know exist. Those that are not practicing it are watching their visibility erode without knowing where the prospects went.

---