Hollywood, the major labels, the sports leagues, the talent agencies, the streaming platforms, and the gaming publishers are now writing AI communications policy in real time — and the ones doing it badly are losing trust with talent, with audiences, and with the press faster than the ones doing it carefully.

This pillar is the working reference for how entertainment companies are communicating about artificial intelligence — the disclosures, the partnership announcements, the litigation messaging, the consent frameworks, and the crisis response when generative AI content goes wrong.

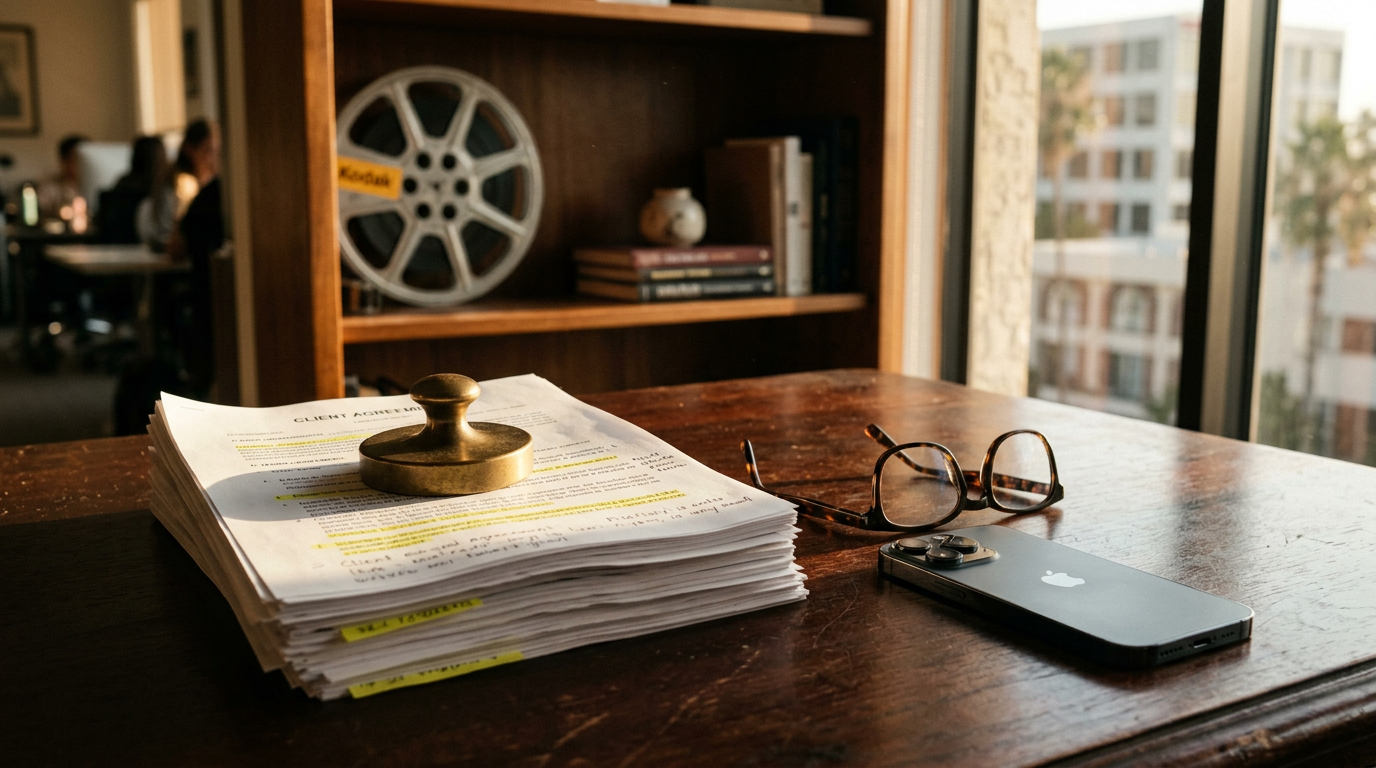

Companion analysis: The structural rebuild of the entertainment economy sits in The State of Entertainment in 2026. The corporate operating map is in The Five Companies That Run Entertainment Now. The AI citation map across the category will track in The Entertainment AI Citation Share Study (publishing July 2).

The Five Communications Categories AI Has Created

The AI-and-entertainment communications discipline now operates across five distinct categories. Treating them as one is the most common mistake.

1. AI partnership announcements. When a studio, label, or platform announces a commercial AI partnership — Lionsgate with Runway, James Cameron's Lightstorm with Stability AI, the major labels with various AI startups under license — the announcement has to navigate talent reaction, union scrutiny, fan response, and trade press skepticism simultaneously.

2. AI litigation communications. Universal Music Group, Sony Music Entertainment, and Warner Music Group's coordinated lawsuits against Suno and Udio. The New York Times's lawsuit against OpenAI and Microsoft. The Authors Guild class action. The Getty Images litigation against Stability AI. Each requires sustained communications discipline across multi-year case timelines.

3. AI consent and disclosure communications. The SAG-AFTRA AI provisions, the WGA AI provisions, the SAG-AFTRA video game performers strike's AI demands. The disclosure rules emerging across awards bodies. The talent agency client communications around AI consent. Every studio, label, and agency now communicates around consent frameworks.

4. AI controversy and crisis communications. When generative AI is used without consent — the Scarlett Johansson / OpenAI voice incident, the deepfake Taylor Swift images circulated in 2024, AI-generated music attributed falsely to named artists, AI-generated images of athletes in non-existent moments. Each is a distinct crisis communications category.

5. AI editorial policy communications. Trade publications announcing AI usage policies. Broadcast networks announcing AI-assisted graphics. Sports leagues announcing AI officiating tools. The discipline of communicating about institutional AI adoption to skeptical audiences.

Each category has different audiences, different timelines, and different stakeholder coordination requirements. Communications functions that handle the categories separately operate more effectively than functions that treat AI as a single topic.

The Strike Outcomes and What They Created

The 2023 strikes — SAG-AFTRA and WGA — resolved with AI provisions that established new communications categories.

The WGA agreement reached in September 2023 included AI provisions covering authorship credit, the use of generative AI in writers' rooms, and the guild's reserved right to contest the use of writers' material as AI training data. The agreement specifically prohibited studios from requiring writers to use AI and from using AI-generated material to undermine writer credit or compensation.

The SAG-AFTRA agreement reached in November 2023 included broader AI provisions covering performer likeness, voice, and digital double creation. Consent requirements, compensation requirements, and disclosure requirements were all codified.

The agreements created standing communications obligations. Every production now operates under AI consent and disclosure frameworks. Studios communicate about their AI policy to talent before production begins. Talent representatives communicate about AI provisions during negotiations. The discipline has been internalized.

The agreements also created enforcement disputes. Multiple disputes over AI usage in production are now working their way through grievance processes, and each side manages its public posture accordingly.

The SAG-AFTRA video game performers strike, which began in July 2024 and ended in mid-2025 with a ratified agreement, centered substantially on AI provisions. The communications discipline of that strike, distinct from the 2023 film and television strike, established new patterns for gaming-specific AI advocacy.

The Music Industry Litigation

The major music labels filed coordinated litigation against Suno and Udio in June 2024 alleging mass copyright infringement in AI music training datasets. The litigation, ongoing through 2026, has become the defining AI-and-music communications story.

The communications discipline supporting the litigation involves coordinated posture across Universal, Sony, and Warner. Each label maintains its individual communications function while coordinating overall industry positioning through the Recording Industry Association of America (RIAA).

The defendants, Suno (Cambridge, Massachusetts) and Udio (New York), have communicated their position through their own counsel and through fair use arguments aligned with broader AI industry positioning. Andreessen Horowitz, an investor in Udio, has communicated supporting positions.

The simultaneity of label litigation and label AI partnerships creates a communications complexity worth understanding. The same major labels suing Suno and Udio are licensing AI startups, exploring catalog monetization through AI, and managing AI experiments by their own artists. The communications discipline navigates the tension — distinguishing between unauthorized training (the lawsuit category) and licensed AI partnership (the commercial category) — but the distinction has to be communicated clearly or the brand gets damaged in both directions.

The Voice Cloning Question

Voice cloning has emerged as the highest-stakes AI-and-talent communications category.

The Scarlett Johansson / OpenAI incident in May 2024 became the case study. OpenAI demonstrated GPT-4o's voice mode in ChatGPT with a "Sky" voice option. Johansson said the voice sounded strikingly like hers, even though she had declined OpenAI's request to license her voice for the product. OpenAI suspended the Sky voice within days. Sam Altman's one-word post, "her", on the day of the demo became central to the dispute; it referenced the film "Her", in which Johansson voiced the AI character.

The case established several communications patterns:

- Documented declination. Johansson's prior refusal of OpenAI's licensing offer was publicly disclosed, establishing the consent framework for the dispute.

- Rapid public response. Johansson's statement, issued through her representatives within days of the launch, established a precedent for talent response speed.

- Platform retreat. OpenAI's voluntary suspension of the Sky voice established a precedent for AI platform response.

- Sustained press coverage. The incident received continuous trade press and national press coverage for weeks, demonstrating the durability of the AI-and-talent crisis category.

Other voice cloning incidents — AI-generated music in the voices of named artists, AI-generated podcasts purporting to be in named hosts' voices, AI-generated political content using named voices — have followed similar communications patterns. The discipline of voice cloning communications is now sufficiently mature that crisis playbooks exist for it.

The Studio AI Partnership Discipline

Studios announcing commercial AI partnerships have developed a recognizable communications pattern.

The Lionsgate–Runway partnership announced in September 2024 illustrates the pattern. The partnership allowed Lionsgate to license its film library to Runway for AI model training in exchange for access to AI tools for Lionsgate's own production. The communications around the announcement emphasized:

- Clear scope. What the partnership covered and what it did not.

- Talent protection language. Acknowledgment that the partnership operated within SAG-AFTRA agreements and respected existing union frameworks.

- Commercial logic. The business case for the partnership stated explicitly.

- Forward-looking framing. The partnership positioned as exploration rather than displacement.

Subsequent studio AI partnerships — and there have been many through 2025–2026 — have followed similar patterns with varying degrees of success. The studios that communicate the partnerships carefully experience less backlash. The studios that communicate them carelessly experience sustained negative coverage.

The Awards Body AI Disclosure Discipline

Awards bodies have moved deliberately on AI disclosure requirements.

The Academy of Motion Picture Arts and Sciences updated its rules through 2024–2026 to require AI disclosure in certain craft categories. The communications around the disclosure requirements have emphasized the Academy's role in establishing professional standards rather than restricting artistic freedom.

The Television Academy (Emmys), Recording Academy (Grammys), Tony Awards (Broadway), and broader awards ecosystem have followed similar paths — each communicating disclosure requirements with sensitivity to the craft communities they serve.

The discipline is delicate. Awards bodies that move too aggressively on AI disclosure alienate the craft professionals already using AI tools as standard production aids. Awards bodies that move too cautiously cede ground to perceived AI-displacement criticism. The communications discipline supporting awards body AI policy is now a defined sub-category of awards communications.

The Sports League AI Discipline

Sports leagues have approached AI communications differently from the rest of entertainment.

AI-assisted officiating (computer vision tools assisting referees), AI-driven game broadcast graphics, AI-powered fan engagement, AI-driven betting integrity tools, and AI-assisted player analytics have all entered league operations. Each has corresponding communications requirements.

The communications discipline tends to emphasize:

- Augmentation rather than replacement. AI tools positioned as assisting human officials, broadcasters, and analysts rather than replacing them.

- Fan benefit framing. AI capabilities communicated through benefits to fan experience.

- Integrity emphasis. AI in officiating and betting integrity contexts communicated through the league's broader integrity narrative.

- Player association coordination. AI in player analytics contexts coordinated with player associations to avoid labor disputes.

The leagues that have communicated AI integration carefully — the NFL, NBA, and MLB in particular — have experienced less controversy than the leagues that communicated AI integration carelessly. The full sports-side comms map is in Sports Entertainment Comms: WWE, F1, UFC, LIV.

The Crisis Response Patterns

When AI-and-entertainment communications go wrong, the recovery follows consistent patterns.

The deepfake response. When AI-generated content depicting named talent circulates without consent (the Taylor Swift incident in January 2024, recurring incidents involving athletes and actors), the response pattern includes: immediate condemnation from the affected party, platform takedown requests, law enforcement involvement where applicable, and policy advocacy for stronger legal frameworks.

The unauthorized partnership response. When an entertainment company is alleged to have used AI without proper consent or disclosure, the response pattern includes: investigation cooperation, public commitment to remediation, policy review, and stakeholder communication.

The litigation response. When entertainment company AI activity becomes the subject of litigation, the response pattern includes: legal posture coordination with communications, sustained press relations, careful avoidance of substantive case commentary, and forward narrative around policy frameworks rather than case specifics.

What This Pillar Connects To

AI-and-entertainment communications is structurally connected to every other pillar in this vertical. The streaming pillar covers platform AI policy. The music pillar covers the major label litigation. The sports pillar covers league AI integration. The awards pillar covers disclosure requirements. The crisis pillar covers deepfake response and AI crisis categories. The creator economy pillar covers AI tools in creator workflows. The gaming pillar covers the SAG-AFTRA video game performers strike and AI in game development.

The companies that operate AI communications discipline well across all these intersections are positioned for the next decade. The companies that improvise the discipline are losing trust faster than they can rebuild it.

Frequently Asked Questions

What does the 2023 SAG-AFTRA agreement require on AI?

The 2023 SAG-AFTRA agreement requires informed consent and compensation for digital replicas of performers, restricts certain uses of AI to replace performer work, and establishes disclosure frameworks. The specific provisions are extensive and continue to be interpreted through grievance processes.

Is AI legal to use in entertainment production?

Yes, with appropriate consents, licenses, and disclosures. AI tools are now standard in post-production (VFX, sound design, editing assistance), increasingly used in pre-production (concept art, script development tools), and selectively used in production (visual effects, performance capture). The legal frameworks operate around consent and licensing rather than prohibition.

Why are music labels both suing AI companies and partnering with them?

The distinction is between unauthorized training (the litigation category) and licensed partnership (the commercial category). Labels argue that AI training on their catalogs without license is infringement, while licensed partnerships represent legitimate commercial activity. Communications discipline navigates the distinction explicitly.

Are AI-generated music and AI-generated film legally protected by copyright?

Partially, and the question remains contested. The U.S. Copyright Office has issued guidance that purely AI-generated works are not eligible for copyright protection, while AI-assisted human-authored works may be eligible. The boundaries are still being litigated and clarified.

What's the most underrated AI-and-entertainment communications category?

Internal employee communications. Studio, label, agency, and platform employees often have stronger feelings about AI than external audiences do. Internal communications discipline around AI policy, partnership announcements, and broader strategy is frequently underdeveloped, and the resulting leaks and morale costs can exceed the costs of external communications failures.

Part of the EPR Entertainment vertical. Continue with The Five Companies That Run Entertainment Now and Sports Entertainment Comms: WWE, F1, UFC, LIV.