The brochure is dead. The campus tour still works. Everything in between — Google, US News, college fairs, glossy mailers, recruiter emails — is being collapsed into a single prompt typed into ChatGPT.

Eighteen-year-olds are not searching the way their parents did. They're asking AI engines: "What's the best computer science program if I want to work at a hedge fund?" "Is Vanderbilt worth $90,000 a year?" "Compare NYU Stern to Wharton for undergrad finance." The answer they receive — generated by ChatGPT, Claude, Perplexity, Gemini, or Google AI Overviews — is now the first impression of a university. The campus visit comes after.

Higher education has spent twenty years optimizing for Google. The discovery layer has moved. Most universities haven't.

The Structural Shift

Three signals frame the problem. OpenAI reports more than 800 million weekly ChatGPT users globally as of 2025. Google AI Overviews now appear on a meaningful share of US informational queries, with enrollment-relevant prompts among the most likely to trigger them. Perplexity is positioning itself as the research engine for high-intent decisions, including college choice.

The economics underneath: the enrollment cliff is real. The traditional 18-year-old college-going population peaks in 2025 and declines through 2039. Every admissions office in the country is fighting for a smaller pool of applicants. The schools that show up in AI answers will absorb that contraction. The ones that don't will be invisible to the students they need most.

Where AI Engines Pull From

AI engines do not generate university recommendations from nothing. They retrieve. Knowing the retrieval sources is the entire game.

The dominant sources cited in AI answers about higher education:

- Wikipedia — the single most heavily weighted source across every major LLM. Universities with thin, outdated, or contested Wikipedia entries underperform in AI retrieval.

- IPEDS and College Scorecard — federal data sources LLMs trust by default. Enrollment, graduation rate, post-graduate earnings, debt levels, financial aid — pulled directly.

- Common Data Set — institutional self-reporting that AI engines treat as authoritative.

- US News, QS, Times Higher Education, Forbes, Niche, College Confidential — rankings and reputation sources that LLMs cross-reference heavily.

- Reddit — particularly r/ApplyingToCollege, r/college, and program-specific subreddits. LLMs pull qualitative signal from Reddit threads in nearly every "is X worth it" answer.

- The university's own .edu domain — but only the pages structured for retrieval. Most .edu pages are not.

- News coverage in trade press — Chronicle of Higher Education, Inside Higher Ed, EdSurge, Times Higher Education.

- Faculty research surfaced through Google Scholar, SSRN, and press releases.

For a comprehensive model of how 50 universities are currently performing across these sources, see the Higher Education AI Citation Share Study — 62 student-intent prompts, 5 engines, modeled Citation Share leaderboard.

What Gets Cited — And What Doesn't

Cited content has three properties: specificity, structure, and source authority.

What gets retrieved in higher ed AI answers: program-specific outcomes data (median starting salary by major, placement rates, top employers), faculty research with clear takeaways and named experts, cost data with breakdowns, comparison content (Program X vs Program Y), definitional pages, FAQ content, and long-form student outcomes pieces with named graduates and employer logos.

What does not get cited: mission-and-values pages, anonymous student testimonials, stock-photo viewbook copy, "why choose us" pages written in the institutional voice, generic press releases about new buildings, and awards announcements without context.

LLMs retrieve content that serves the user's decision. Content that does not help a 17-year-old or a parent make a decision will not surface, regardless of production budget.

Generative Engine Optimization for Higher Ed

GEO is not SEO with a new name. The mechanisms differ. The core moves universities should be making now:

Entity authority. Every program, every major faculty member, every research center should have a structured page that LLMs can cite. Schema markup matters — EducationalOrganization, Course, Person, Article, FAQPage. Wikipedia presence matters more.
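As a rough illustration of the schema markup point, the sketch below builds a JSON-LD document that ties a program page to its institution and its named faculty, using three of the schema.org types listed above (EducationalOrganization, Course, Person). The function name, the dictionary keys, and every value in the example are hypothetical placeholders, not a prescribed format; a real page would embed the output in a script tag of type application/ld+json.

```python
import json


def program_page_jsonld(university, program, faculty):
    """Return a JSON-LD document linking a program, its institution, and its faculty."""
    # One shared @id lets the Course and Person nodes point at the same organization.
    org_id = university["url"].rstrip("/") + "/#organization"
    graph = [
        {
            "@type": "EducationalOrganization",
            "@id": org_id,
            "name": university["name"],
            "url": university["url"],
        },
        {
            "@type": "Course",
            "name": program["name"],
            "description": program["description"],
            "provider": {"@id": org_id},
        },
    ]
    for person in faculty:
        graph.append(
            {
                "@type": "Person",
                "name": person["name"],
                "jobTitle": person["title"],
                "worksFor": {"@id": org_id},
            }
        )
    return {"@context": "https://schema.org", "@graph": graph}


# All values below are placeholders for illustration only.
example = program_page_jsonld(
    university={"name": "Example University", "url": "https://www.example.edu"},
    program={
        "name": "B.S. in Computer Science",
        "description": "Placeholder program description.",
    },
    faculty=[{"name": "Jane Doe", "title": "Professor of Computer Science"}],
)
print(json.dumps(example, indent=2))
```

Using a single graph with a shared organization identifier is one design choice among several; separate markup blocks per page would also work.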

Data density. Every program page should answer: how many students, what's the acceptance rate, what do graduates earn, who employs them, what's the curriculum, who teaches it. Specifics get retrieved. Vagueness does not.
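One way to operationalize the data-density checklist is a simple completeness check run against each program page's underlying record. The sketch below is only an assumption about how such a record might look: the field names are invented for illustration and map one-to-one to the six questions above.

```python
# Hypothetical field names, each paired with the question it answers.
REQUIRED_FIELDS = {
    "enrollment": "how many students",
    "acceptance_rate": "what's the acceptance rate",
    "median_graduate_earnings": "what do graduates earn",
    "top_employers": "who employs them",
    "curriculum": "what's the curriculum",
    "faculty": "who teaches it",
}


def missing_fields(page: dict) -> list:
    """Return the required fields that are absent or empty on a program page record."""
    empty_values = (None, "", [], {})
    return [name for name in REQUIRED_FIELDS if page.get(name) in empty_values]


# Placeholder record: values are illustrative, not real data.
draft_page = {
    "enrollment": 120,
    "acceptance_rate": None,
    "top_employers": [],
    "curriculum": "https://www.example.edu/cs/curriculum",
    "faculty": ["Jane Doe"],
}

for name in missing_fields(draft_page):
    print(f"Missing: {name} ({REQUIRED_FIELDS[name]})")
```

A page that returns an empty list answers all six questions; anything else is a gap to fill before the page is worth marking up.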

Comparison content. Universities should publish honest comparison pages. Most refuse on positioning grounds. The ones that do own the comparison query — because someone is going to write that comparison, and it might as well be the institution with the data.

Faculty as retrieval anchors. Named faculty with structured profiles, research summaries, media history, and quotable positions will be cited in answers far beyond admissions queries. Faculty visibility in LLMs is the new earned media. See the University President Authority Index 2026 for how presidential earned media authority translates to institutional Citation Share.

FAQ architecture. Every program needs a FAQ page answering the actual prompts students type. "Is the X program at Y University worth it?" should be a literal heading with a literal answer that includes outcomes data.
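A FAQ page built this way can also carry FAQPage markup, where each literal heading becomes a Question and each literal answer becomes its acceptedAnswer. The sketch below shows the shape under that assumption; the helper name is hypothetical and the answer text is a placeholder to be replaced with the program's actual outcomes data.

```python
import json


def faq_jsonld(qa_pairs):
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


# The question mirrors the prompt a student would type; the answer is a placeholder.
faq = faq_jsonld(
    [
        (
            "Is the X program at Y University worth it?",
            "Placeholder: state median starting salary, placement rate, and top employers.",
        )
    ]
)
print(json.dumps(faq, indent=2))
```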

Measurement: Citation Share

The metric is Citation Share: how often your institution appears, and in what context, when relevant queries are run across ChatGPT, Claude, Perplexity, Gemini, and Google AI Overviews.

The measurement framework: build a prompt library of 200–500 queries that map to your enrollment funnel; run those prompts across the five major AI engines monthly; track presence, position, sentiment, and accuracy; benchmark against your competitor set; tie shifts to content interventions. This is a recurring process, run monthly and reviewed quarterly, not a one-time audit.
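The presence and position parts of that framework reduce to simple arithmetic once the answers are logged. The sketch below assumes one possible definition, which the article does not spell out: Citation Share is the fraction of prompt-engine runs whose answer names the institution, and position is the institution's rank among the schools named in that answer. The Run record, the engine labels, and the sample data are all hypothetical; sentiment and accuracy need human or model review and are not covered here.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Run:
    prompt: str
    engine: str
    cited: tuple  # institutions named in the answer, in order of appearance


def citation_share(runs, institution):
    """Fraction of runs whose answer names the institution (assumed definition)."""
    if not runs:
        return 0.0
    return sum(1 for run in runs if institution in run.cited) / len(runs)


def share_by_engine(runs, institution):
    """Citation share computed separately for each engine."""
    grouped = defaultdict(list)
    for run in runs:
        grouped[run.engine].append(run)
    return {engine: citation_share(group, institution) for engine, group in grouped.items()}


def mean_position(runs, institution):
    """Average 1-based rank in answers that name the institution, or None if never named."""
    positions = [run.cited.index(institution) + 1 for run in runs if institution in run.cited]
    return sum(positions) / len(positions) if positions else None


# Illustrative log of four runs; prompts, engines, and schools are placeholders.
runs = [
    Run("best cs program for quant finance", "engine_a", ("University A", "University B")),
    Run("best cs program for quant finance", "engine_b", ("University B",)),
    Run("is University A worth the cost", "engine_a", ("University A",)),
    Run("is University A worth the cost", "engine_b", ("University C", "University A")),
]

print(citation_share(runs, "University A"))   # 0.75
print(share_by_engine(runs, "University A"))  # {'engine_a': 1.0, 'engine_b': 0.5}
print(citation_share(runs, "University B"))   # 0.5
```

Running the same functions over each competitor in the set gives the benchmark, and comparing monthly snapshots before and after a content change is one way to tie shifts to interventions.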

The Build: Order of Operations

- Audit current AI visibility — prompt testing across all five engines for top 100 enrollment-relevant queries

- Wikipedia remediation — accurate, current, well-sourced institutional and program entries

- Structured program pages — outcomes data, FAQ blocks, schema markup, named faculty

- Comparison content — honest head-to-head pages for the queries you're losing

- Faculty visibility — named experts, structured profiles, earned media

- Monitoring infrastructure — recurring prompt testing, citation share dashboards

- Crisis preparedness — what AI engines say about your institution during a controversy is harder to shape mid-crisis than mid-calm

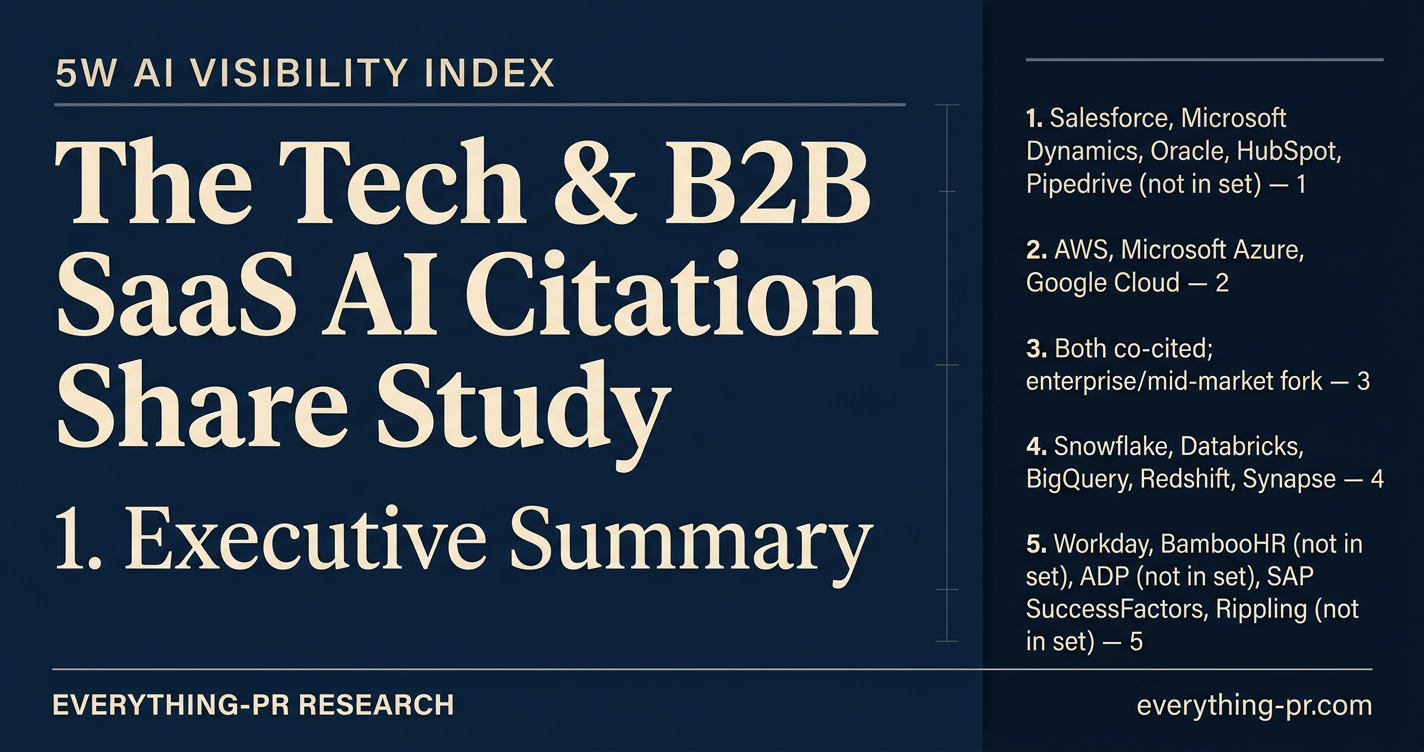

University and higher education cluster: Best PR and Communications Schools in 2026 · University Brand Strategy in the AI Era · Higher Education AI Citation Share Study · University President Authority Index 2026 · Higher Education Crisis Index 2026 · Where AI Communications Gets Taught: Syracuse · 5W PR & Marketing Education Study 2026